Vm Workstation Error Reading Volume Information Map Virtual Disk

VM hardware resource

Each Virtual Machine is a collection of resources provided by the infrastructure layer, normally organized in a pool of resources and assigned dynamically (or in some instances statically) to each VM.

Each VM "sees" a subset of the physical resources in the form of virtual hardware components, usually defined by the following minimum elements:

- Hardware platform type (x86 for 32 bit VM or x64 for 64 bit VM)

- Virtual hardware type (depending on the virtualization layer)

- Virtual CPU (and maybe virtual sockets and virtual cores)

- Virtual RAM (and maybe also persistent RAM)

- Virtual disk connected to a virtual controller

- Virtual NIC

Then there can also be additional hardware components that may not be mandatory but are useful in specific use cases, or that are needed for some basic operations. For example, installing the guest OS requires a video driver, a keyboard, and a mouse device in order to use the remote console.

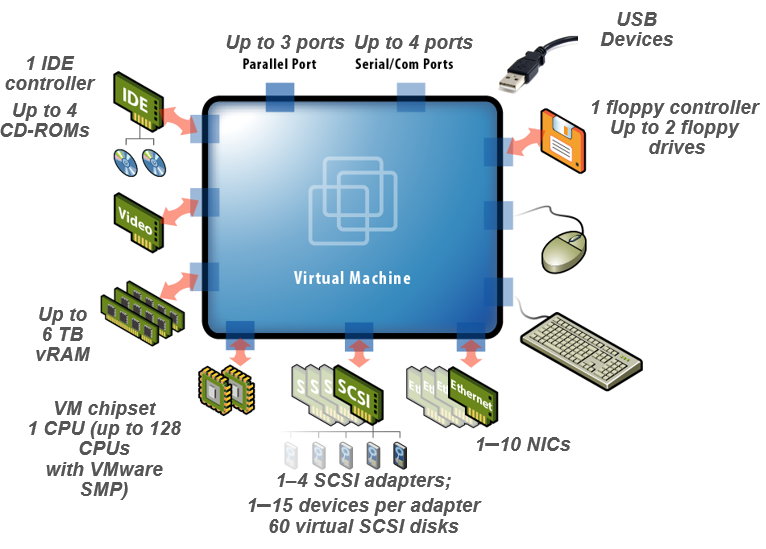

On VMware vSphere it's possible to add a lot of hardware devices to a VM:

There are also PCI devices, other SCSI devices, virtual FC ports, and several other types of storage devices (like NVMe and SATA based).

Recently, virtual GPUs have also become more popular on some server workloads, due to the needs of specific computational tasks that are better performed on a general-purpose GPU instead of a general-purpose CPU (note that vSphere 6.7 U1 adds the ability to vMotion VMs with a virtual GPU).

VM hardware resources hot changes

But which kinds of resources can be modified on a running VM?

This really depends on the hypervisor and its version (and sometimes also on its edition), but there are a lot of possibilities.

The following table summarizes the different operations on a running VM with a "recent" guest OS:

| Type of resource | Hot-increasing the resource | Hot-decreasing the resource |
| --- | --- | --- |
| CPU (socket) | Could be possible | Could be possible |
| CPU (core) | Not possible due to OS limitations | Not possible due to OS limitations |
| RAM | Could be possible | Could be possible but usually not implemented |
| Disk (device) | Could be possible (not on IDE devices) | Could be possible (not on IDE devices) |
| Disk (space) | Could be possible (not on IDE devices) | Could be possible (not on IDE devices) but usually not implemented |
| Storage controller | Could be possible | Could be possible but usually not implemented |
| Network controller | Could be possible | Could be possible |
| PCI device | Could be possible | Could be possible but usually not implemented |
| USB device | Could be possible | Could be possible |
| Serial or parallel device | Not possible | Not possible |
| General-purpose GPU | Could be possible | Could be possible but usually not implemented |

Now let's provide more information about some of the different resources, along with some tips and notes about changing the hardware resources on a running VM.

To make the case clearer and more specific, we will consider a VM running on vSphere 6.7 Update 1.

Virtual CPU hot-add and hot-remove

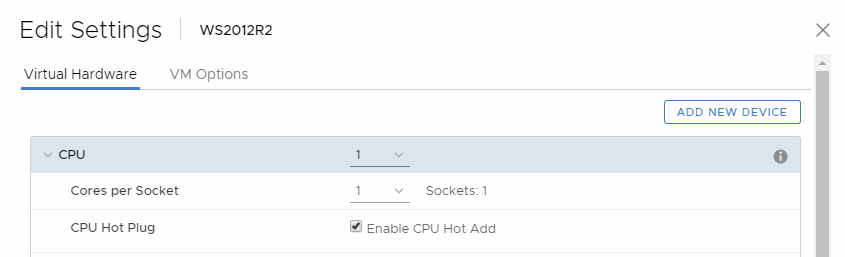

By default, you cannot add CPU resources to a virtual machine when the virtual machine is turned on. The CPU hot add option lets you add CPU resources to a running virtual machine.

There are some prerequisites for enabling the CPU hot add option. Verify that the VM is configured as follows:

- Virtual machine is turned off.

- Virtual machine compatibility is ESX/ESXi 4.x or later.

- Latest version of VMware Tools installed.

- Guest operating system that supports CPU hot plug (basically a "recent" OS; for Windows, starting from Windows Server 2008 or Vista).

To enable the CPU hot add option:

- Right-click a virtual machine in the inventory and select Edit Settings.

- On the Virtual Hardware tab, expand CPU, and select Enable CPU Hot Add.

- Click OK.
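The prerequisite checklist above can be sketched as a small pre-flight check. This is only an illustration: `VmInfo` and `cpu_hot_add_blockers` are hypothetical names, not part of any VMware API, and the mapping of "ESX/ESXi 4.x or later" compatibility to virtual hardware version 7 is stated here as an assumption of the sketch.

```python
from dataclasses import dataclass
from typing import List

# Assumption of this sketch: "ESX/ESXi 4.x or later" compatibility
# corresponds to virtual hardware version 7 or higher.
MIN_HARDWARE_VERSION = 7


@dataclass
class VmInfo:
    """Hypothetical snapshot of the VM properties the checklist looks at."""
    powered_on: bool
    hardware_version: int
    tools_up_to_date: bool
    guest_supports_cpu_hot_plug: bool


def cpu_hot_add_blockers(vm: VmInfo) -> List[str]:
    """Return the reasons why 'Enable CPU Hot Add' cannot be set yet."""
    blockers = []
    if vm.powered_on:
        blockers.append("power off the VM before changing the setting")
    if vm.hardware_version < MIN_HARDWARE_VERSION:
        blockers.append("upgrade VM compatibility to ESX/ESXi 4.x or later")
    if not vm.tools_up_to_date:
        blockers.append("install the latest VMware Tools")
    if not vm.guest_supports_cpu_hot_plug:
        blockers.append("guest OS does not support CPU hot plug")
    return blockers
```

An empty list means the option can be enabled; otherwise each entry names one prerequisite that is still missing.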

Now you can hot-add one or more sockets to a running VM. Note that it is only possible to hot-add sockets with the same number of cores as the existing sockets. The number of cores per socket cannot be changed on a running VM.

On Windows Server Datacenter edition it's also possible to hot-remove sockets; for other OSes you need to shut down the VM to remove sockets.
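To make the socket rule concrete, here is a minimal sketch of the arithmetic: hot-add only changes the socket count, while cores per socket stays fixed. `CpuTopology` and `hot_add_sockets` are hypothetical helper names used for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CpuTopology:
    sockets: int
    cores_per_socket: int

    @property
    def vcpus(self) -> int:
        # Total vCPUs seen by the guest.
        return self.sockets * self.cores_per_socket


def hot_add_sockets(current: CpuTopology, extra_sockets: int) -> CpuTopology:
    """Return the topology after hot-adding sockets to a running VM.

    Cores per socket is carried over unchanged, because it cannot be
    modified while the VM is running.
    """
    if extra_sockets < 1:
        raise ValueError("hot-add must add at least one socket")
    return CpuTopology(current.sockets + extra_sockets, current.cores_per_socket)
```

For example, a VM with 2 sockets of 4 cores each has 8 vCPUs; hot-adding one socket gives 3 sockets of 4 cores, so the vCPU count can only grow in steps of 4.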

This feature is available only with Standard or Enterprise Plus editions and is still one of the distinctive features of vSphere compared to other hypervisors (currently Microsoft Hyper-V does not implement it, but Nutanix AHV, for example, already has this feature).

There are some notes and suggestions for managing CPU hot-add (and hot-remove):

- For best results, use virtual machines that are compatible with ESXi 5.0 or later.

- Hot-adding multi-core virtual CPUs is supported only with virtual machines that are compatible with ESXi 5.0 or later.

- To use the CPU hot plug feature with virtual machines that are compatible with ESXi 4.x and later, set the Number of cores per socket to 1.

- Virtual NUMA works correctly only with vSphere 6.x when you hot-add a new socket.

- Not all guest operating systems support CPU hot add. You can disable these settings if the guest is not supported. See also VMware KB 2020993 (CPU Hot Plug Enable and Disable options are grayed out for virtual machines).

- You can have some minor issues when you hot-add a CPU to a VM with a single CPU. For instance, on Windows systems Task Manager does not show the change immediately; just close and open it again.

- Test the process on the VM before putting it in production. There are some Windows Server 2016 builds that may stop with a BSOD during a CPU hot-add (see https://vinfrastructure.it/2018/05/windows-server-2016-reboot-after-hot-adding-cpu-in-vsphere-6-5/; the same can also happen on vSphere 6.7).

- Adding CPU resource to a running virtual machine with CPU hot plug enabled disconnects and reconnects all USB passthrough devices that are connected to that virtual machine.

- Hot-adding virtual CPUs to a virtual machine with NVIDIA vGPU requires that the ESXi host have a free vGPU slot.

Virtual RAM hot-plug

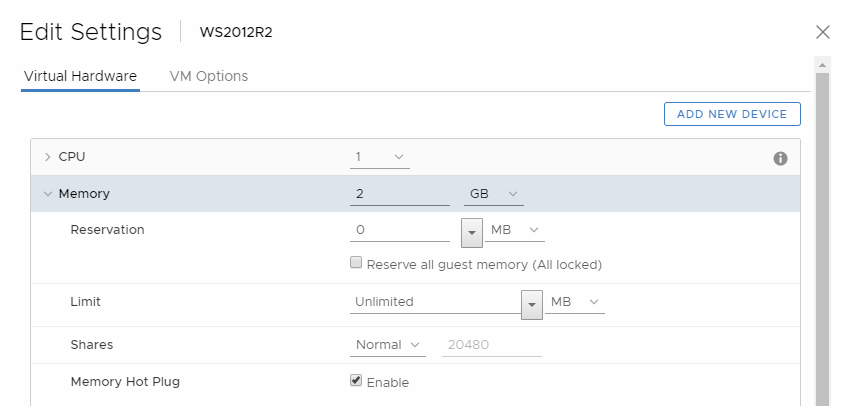

Memory hot add lets you add memory resources to a virtual machine while that virtual machine is turned on.

There are some prerequisites for enabling the RAM hot plug option. Verify that the VM is configured as follows:

- Virtual machine is turned off.

- Virtual machine compatibility is ESX/ESXi 4.x or later.

- Latest version of VMware Tools installed.

- Guest operating system that supports memory hot plug (basically a "recent" OS; for Windows, starting from Windows Server 2008 or Vista, but some editions of Windows Server 2003 are also supported).

- For a better Windows OS support table, see this blog post: https://www.petenetlive.com/KB/Article/0000527

To enable the RAM hot plug option:

- Right-click a virtual machine in the inventory and select Edit Settings.

- On the Virtual Hardware tab, expand Memory, and select Enable to enable adding memory to the virtual machine while it is turned on.

- Click OK.

This feature is available only with Standard or Enterprise Plus editions and only allows hot-adding RAM to a running VM. Note that this feature is now also implemented in other hypervisors (for example, in Microsoft Hyper-V 2016 and later it's possible to add and also remove RAM).

There are some notes and suggestions for managing RAM hot-add:

- For best results, use virtual machines that are compatible with ESXi 5.0 or later.

- Enabling memory hot add produces some memory overhead on the ESXi host for the virtual machine.

- Not all guest operating systems support RAM hot add. You can disable these settings if the guest is not supported.

- Hot-adding memory to a virtual machine with NVIDIA vGPU requires that the ESXi host have a free vGPU slot.

- Virtual NUMA works correctly only with vSphere 6.x when you hot-add a new socket.

- Test the process on the VM before putting it in production. I haven't noticed any big issues (unlike with CPU hot-add), but always test your features in a non-critical environment.

Virtual disks

When you create a virtual machine, a default virtual hard disk is added. You can add another hard disk if you run out of disk space, if you want to add a boot disk, or for other file management purposes.

In VMware vSphere you can hot-add and hot-remove a virtual disk, and also hot-add space to existing virtual disks. For RDM disks there can be some limitations, but we don't consider this kind of disk in this article.

Most of those operations and features are also available in other hypervisors. On ESXi they have been available since version 3.x, and also in the free edition!

In vSphere, disk space can only be added to existing disks. The shrink option to reduce a virtual disk size (available, for example, on Microsoft Hyper-V) is not available on vSphere.

Note that all the hot-add features are available only when virtual disks are connected to a SCSI, SATA, or SAS controller. On an IDE controller those features do not work due to IDE/PATA limitations.

The maximum number of disks depends on the controller type. Currently we can have:

- Max 4 virtual disks on IDE controllers

- Max 60 virtual disks on SCSI/SAS controllers (up to 4 controllers)

- Max 60 virtual disks on NVMe controllers (up to 4 controllers)

- Max 120 virtual disks and/or CDROM devices on SATA controllers (up to 4 controllers)

- Max 256 virtual disks on PVSCSI controllers (up to 4 controllers), new in vSphere 6.7
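As a quick sanity check on these maximums, the sketch below stores the per-VM totals from the list above and derives the per-controller figure by simple division. The dictionary layout and the function name `disks_per_controller` are hypothetical; the IDE entry has no controller count because the list does not give one.

```python
from typing import Dict, Optional, Tuple

# (max devices per VM, max controllers per VM) as listed above.
# None means the list does not state a controller count.
MAX_DISKS: Dict[str, Tuple[int, Optional[int]]] = {
    "IDE": (4, None),
    "SCSI/SAS": (60, 4),
    "NVMe": (60, 4),
    "SATA": (120, 4),
    "PVSCSI": (256, 4),
}


def disks_per_controller(kind: str) -> Optional[int]:
    """Derive how many devices a single controller of this kind can hold."""
    total, controllers = MAX_DISKS[kind]
    if controllers is None:
        return None
    return total // controllers


for kind in MAX_DISKS:
    print(kind, disks_per_controller(kind))
```

This makes the jump in vSphere 6.7 easier to see: a PVSCSI controller goes to 64 disks, compared with 15 for a classic SCSI/SAS controller.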

The maximum value for large capacity hard disks is 62 TB. When you add or configure virtual disks, always leave a small amount of overhead. Some virtual machine tasks can quickly consume large amounts of disk space, which can prevent successful completion of the task if the maximum disk space is assigned to the disk. Such events might include taking snapshots or using linked clones. These operations cannot finish when the maximum amount of disk space is allocated. Also, operations such as snapshot quiesce, cloning, Storage vMotion, or vMotion in environments without shared storage can take significantly longer to finish.

Virtual machines with large capacity virtual hard disks, or disks greater than 2 TB, must meet resource and configuration requirements for optimal virtual machine performance.

Historically those "jumbo" disks have had some limitations in vSphere (for more information see https://vinfrastructure.it/2017/02/jumbo-disk-vmware-esxi/), but starting with vSphere 6.5 most limitations are gone.

VMs with large capacity disks have the following conditions and limitations:

- The guest operating system must support large capacity virtual hard disks and must use a GPT partition table (MBR tables are limited to 2 TB).

- You can move or clone disks that are greater than 2 TB to ESXi 6.0 or later hosts or to clusters that have such hosts available.

- You can hot-add space to a disk that is greater than 2 TB only from vSphere 6.5 and later.

- Fault Tolerance is not supported.

- BusLogic Parallel controllers are not supported.

- The datastore format must be one of the following:

  - VMFS5 or later

  - An NFS volume on a Network Attached Storage (NAS) server

  - vSAN

- Virtual Flash Read Cache supports a maximum hard disk size of 16 TB.
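The disk-growth rules scattered through this section (grow only, not on IDE, 62 TB maximum, more than 2 TB only from vSphere 6.5) can be collected in one small check. This is a sketch, not a VMware API: `hot_extend_problem` is a hypothetical name, and applying the 6.5 rule whenever the resulting size exceeds 2 TB is a conservative assumption of this example.

```python
from typing import Optional, Tuple

MAX_DISK_TB = 62    # maximum size of a large capacity virtual disk
LARGE_DISK_TB = 2   # above this size the "jumbo" disk rules apply


def hot_extend_problem(current_tb: float, new_tb: float, controller: str,
                       vsphere_version: Tuple[int, int]) -> Optional[str]:
    """Return None if the hot-extend looks allowed, otherwise the reason it is not."""
    if controller.upper() == "IDE":
        return "hot-add features do not work on IDE controllers"
    if new_tb <= current_tb:
        return "vSphere can only add space to a disk, not shrink it"
    if new_tb > MAX_DISK_TB:
        return "virtual disks cannot exceed 62 TB"
    # Assumption: the rule is applied to the resulting size of the disk.
    if new_tb > LARGE_DISK_TB and vsphere_version < (6, 5):
        return "hot-extending disks beyond 2 TB needs vSphere 6.5 or later"
    return None
```

For instance, `hot_extend_problem(3, 4, "SCSI", (6, 0))` returns the vSphere 6.5 message, while the same call with `(6, 7)` returns `None`.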


GPU

If an ESXi host has an NVIDIA GRID GPU graphics device, you can configure a virtual machine to use the NVIDIA GRID virtual GPU (vGPU) technology.

NVIDIA GRID GPU graphics devices are designed to optimize complex graphics operations and enable them to run at high performance without overloading the CPU. NVIDIA GRID vGPU provides unparalleled graphics performance, cost-effectiveness, and scalability by sharing a single physical GPU among multiple virtual machines as separate vGPU-enabled passthrough devices.

There are some prerequisites to support the virtual GPU:

- Verify that an NVIDIA GRID GPU graphics device with an appropriate driver is installed on the host. See the vSphere Upgrade documentation.

- Verify that the virtual machine is compatible with ESXi 6.0 and later.

To add the GPU:

- Right-click a virtual machine in the inventory and select Edit Settings.

- On the Virtual Hardware tab, select Shared PCI Device from the New device drop-down menu.

- Click Add.

- Expand the New PCI device, and select the NVIDIA GRID vGPU passthrough device to which to connect your virtual machine.

- Select a GPU profile. A GPU profile represents the vGPU type.

- Click Reserve all memory.

- Click OK.

Source: https://www.starwindsoftware.com/blog/changing-the-hardware-resources-on-a-running-vm
